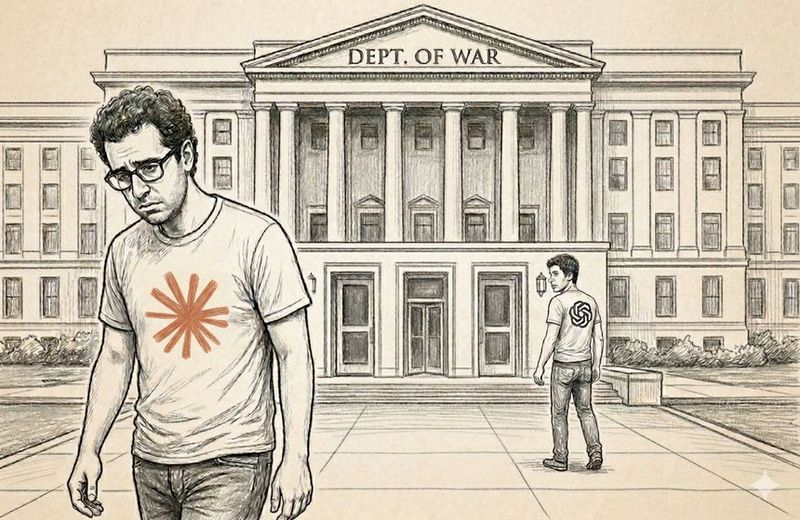

The Compulsion Paradox and the Pentagon-Anthropic Spat: When "Deregulation" Means Dictating AI Design

This is the second installment in our series examining the Pentagon-Anthropic standoff and its implications for AI governance. Part 1 traces the historical pattern of government-technology confrontations from the Clipper Chip to Claude. This post examines what happens when a deregulatory administration reaches for a Korean War-era compulsion statute to tell an AI company how to build its product.

The Trump Administration promised to unleash American AI by cutting regulation. But it attempted what is likely the most expansive act of government AI regulation in United States history when it threatened to invoke the Defense Production Act to compel a private company to redesign the safety architecture of its product. And when that company refused, the government designated it a national security supply-chain risk under 10 U.S.C. § 3252. The tool wasn't a notice-and-comment rulemaking or a congressional mandate. It was a 72-hour ultimatum backed by the threat of DPA compulsion and, ultimately, a procurement blacklist.

That is the paradox at the center of the Pentagon-Anthropic standoff, and it raises a question that extends well beyond defense procurement: when government compulsion authority meets private AI safety architecture, which one gives?

Two Principles in Genuine Tension

Both sides rest on principles that deserve serious engagement.

The Pentagon's argument is not devoid of substance. Private companies are not normally the arbiters of what the United States military may lawfully do. Democratic accountability for military conduct runs through Congress, the courts, and the executive chain of command — not through AI vendor contracts. When a Pentagon official stated, "We do have to be prepared for what China is doing," the underlying concern was not trivial. In a geopolitical environment where adversaries operate without equivalent self-imposed constraints, the argument that safety restrictions disadvantage the United States in AI-enabled competition is a genuine policy debate.

Anthropic's position is equally grounded. CEO Dario Amodei's February 24 statement was not a blanket objection to military AI. He endorsed AI for "lawful foreign intelligence and counterintelligence missions" and praised partially autonomous weapons like those used in Ukraine as "vital to the defense of democracy." His two objections were narrow: (1) mass warrantless surveillance of Americans, and (2) fully autonomous weapons deployed without adequate human oversight.

Indeed, Anthropic had already developed “Claude Gov” — a version of its model specifically fine-tuned for national security applications with fewer restrictions than civilian Claude. Its government addendum permitted uses prohibited for civilian customers, including analysis of lawfully collected foreign intelligence. The two bright lines were all that remained in dispute.

Neither Anthropic's position nor the Pentagon's is inherently unreasonable. The question is how the collision resolves.

What "AI Safety" Actually Means Here

The debate is muddied by the term "safety guardrails," which sounds like bureaucratic caution. It is not. In frontier AI systems like Claude and ChatGPT, safety is architectural — built into the model itself through techniques such as reinforcement learning from human feedback (RLHF) and what Anthropic calls "Constitutional AI," in which the model is trained against a set of principles governing its behavior.

These are not content filters bolted onto a finished product. They shape how the model reasons, what it will and will not generate, and how it responds to adversarial prompts. Removing them does not produce the same system minus restrictions. It produces a fundamentally different system — one whose behavior in high-stakes environments is unpredictable in ways that matter when the outputs involve targeting decisions or surveillance actions.

This is Amodei's technical argument distilled: "Frontier AI systems are simply not reliable enough to power fully autonomous weapons." This is an engineering-grade risk assessment. As we discussed previously, the difference between AI governance theater and real controls is whether safety mechanisms actually constrain behavior under pressure. Architectural safety does. Contractual language, standing alone, often does not — a point the Grok-Pentagon episode we examined in Part 1 illustrates vividly.

The Compulsion Paradox

Here is an irony that has largely escaped commentary: threatening to invoke the Defense Production Act, and then using a procurement blacklist, to compel an AI company to strip its safety architecture is itself an extraordinary act of technology regulation.

The DPA's Title I allocation authority (50 U.S.C. § 4511) has been largely untested since the Korean War. The supply-chain-risk designation under 10 U.S.C. § 3252 — the tool the Pentagon actually used — was designed to address foreign adversary infiltration of the defense industrial base, not to punish a domestic company for its AI safety policies.

Compelling a company to provide a product is one thing. Compelling a company to modify the safety architecture of its product — removing features the company considers essential to responsible operation — is quite another. The distinction between “sell us your AI” and “sell us your AI without the controls you built into it” raises questions under the Youngstown framework for executive authority and potentially the First Amendment, given that an AI model's trained values arguably constitute expressive choices.

A former Pentagon official remarked that Secretary Hegseth's rationale for blacklisting was "extremely weak." Likewise, Dean Ball, formerly a policy adviser on AI for the Trump White House and the primary drafter of the administration's AI Action Plan, called the supply chain designation "the destruction of a company for its political and philosophical orientation." Ball did not dispute that the government could decline to contract with Anthropic or even insist on removing Claude from its supply chain. What he objected to was the leap from contract cancellation to existential punishment. Coming from the person who helped write the administration's deregulatory AI agenda, the characterization is difficult to dismiss as partisan over-reading.

The weakness of the rationale became more difficult to ignore within hours. The same day the Pentagon designated Anthropic a supply-chain risk, OpenAI CEO Sam Altman announced on X that his company had finalized its own Pentagon agreement, all the while claiming to have held fast on the same two principles that got Anthropic blacklisted. "Two of our most important safety principles," Altman wrote, "are prohibitions on domestic mass surveillance and human responsibility for the use of force, including for autonomous weapon systems."

The Pentagon accepted those terms from OpenAI. It blacklisted Anthropic for demanding them.

The Pentagon's recent rhetoric did not clarify the distinction. A representative stated that the department “will always come to the table for reasonable discussion as we did with OpenAI. Anthropic didn't want to do that, because they have their own personal vendettas.” The Pentagon did not explain how a company's objections to mass warrantless surveillance of Americans and to AI-aided, fully autonomous weapons constitute a personal vendetta.

For observers following this administration's stated commitment to reducing AI regulatory burdens (a posture we analyzed when we examined Executive Order 14365, “Ensuring a National Policy Framework for Artificial Intelligence,” and the proposed TRUMP AMERICA AI Act), the Pentagon's approach creates an uncomfortable tension. An administration that positions itself as deregulatory is deploying one of the most coercive tools in the federal procurement arsenal to dictate the terms of AI system design. The mechanism differs from prescriptive rulemaking. The effect is the same.

Same Guardrails, Different Architecture

How OpenAI finalized its Pentagon agreement within hours of Anthropic's designation deserves attention, because (on its face, at least) the sequence undermines the framing that Anthropic's position was operationally unworkable.

Rather than seeking contractual prohibitions, OpenAI built similar, if not equivalent, protections into its technical architecture and negotiated for its own personnel to work alongside government staff in classified environments. Anthropic was branded a national security threat for insisting on two safeguards. Hours later, its competitor secured a deal with those same safeguards baked in.

The question every AI vendor should be asking is whether the government's objection is to the safety guardrails themselves or to the vendor's insistence on maintaining independent control over them. That distinction carries very different implications for governance.

The Litigation

On March 9, Anthropic filed suit in the Northern District of California, naming 18 federal agencies and 17 officials as defendants. The complaint alleges First Amendment retaliation, ultra vires executive action under Youngstown, Fifth Amendment due process violations, and, notably, that the presidential directive targeting Anthropic by name functions as an unlawful bill of attainder. A separate challenge under 41 U.S.C. § 4713 is expected in the D.C. Circuit.

Anthropic's lawsuit will test the supply-chain-risk statute, the DPA's compulsion authority, and multiple constitutional doctrines in a context their drafters never imagined. The resolution will establish precedent not only for defense procurement but for the broader question of whether the government can compel modification of AI safety architecture. The bill of attainder claim, in particular, asks whether the executive branch can single out a company by name for punishment based on its public advocacy — a question with implications well beyond AI.

In the end, the most consequential AI regulation in American history may be written not by regulators but by whichever court decides whether “deregulation” includes the power to order a company to make its AI less safe.

____________________________________________________________________________________

In our next installment, we will examine what the Pentagon's demands mean for the domain where government AI power meets everyday life most directly: surveillance and predictive policing.

For questions about AI governance, government technology procurement, and vendor risk management, contact the Jones Walker Privacy, Data Strategy, and Artificial Intelligence team. And stay tuned (and subscribe) for continued insights from the AI Law and Policy Navigator.

"The divergence between what people are saying about A.I. and what is, in fact, happening has just never been wider." Dean Ball, Former Sr. AI Policy Advisor, Trump Administration